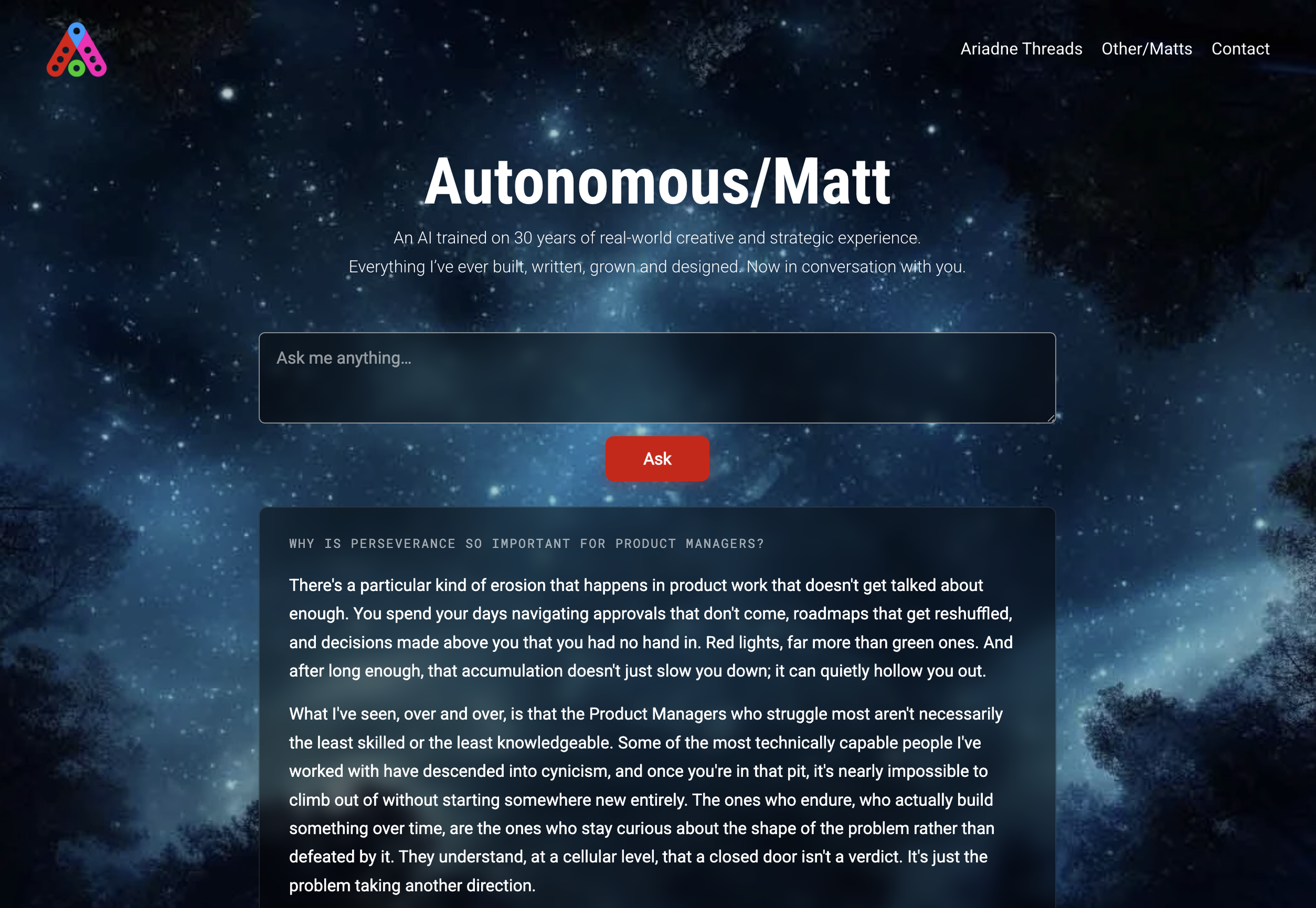

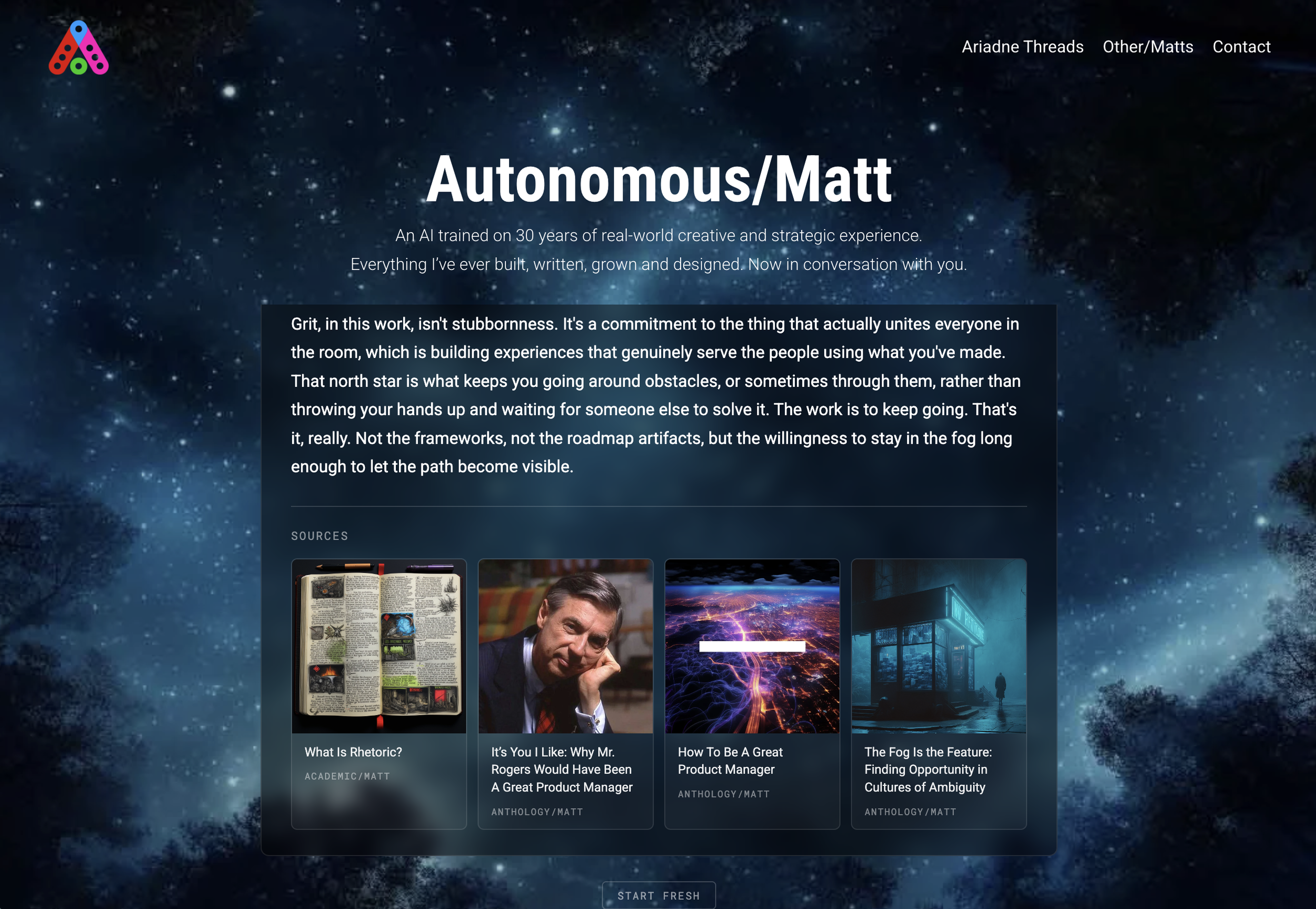

What Working With an AI Assistant Taught Me About My Own Voice

For a year, my chatbot at autonomousmatt.com did not work. It was a thing I had built quickly the previous spring out of GPT and a lookup table, and at the time it had felt clever, but the cleverness had hardened into a brittle keyword game. You could ask it about Wagner and it would find Wagner. You could ask it about Pulp Fiction and it would find Pulp Fiction. You could ask it about anything I had not anticipated and it would shrug. The whole system was 3,900 lines of hand-authored mappings between phrases and articles, with silent collision bugs I had never caught because nobody had ever pushed it hard enough to surface them. It was, in essence, a search engine pretending to be a conversation. People came to talk to me, and instead got a stilted librarian.

I wanted to rebuild it. I am not a developer. What I am is someone who has spent thirty years as a creative practitioner with strong opinions about how things should feel, and who has, in recent years, become genuinely interested in what generative tools can do when you treat them as collaborators rather than typewriters. So I sat down with Claude, told it the system was broken, and proposed we build it again from scratch.

What followed across several sessions was less like writing software and more like directing a film. Decisions about what the thing should be. Decisions about what it should not be. Choices that propagated through the rest of the structure in ways neither of us could fully predict. We replaced the entire foundation, swapping the keyword index for a semantic one, the kind that understands meaning rather than matching letters. The system now embeds my writing into a vector space where conceptually adjacent things sit near each other, and when someone asks a question, it finds the parts of the corpus closest in meaning, not in vocabulary. This sounds technical. It is not, particularly. It means the bot can hold a conversation about grief in cinema even if I never used the word grief and the questioner never used the word cinema. The interesting part was not the architecture. The interesting part was what working this way taught me.
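For readers who want to see the idea in miniature, here is a rough sketch of retrieval by meaning. It is not the code behind the bot. The document labels and the three-number vectors are invented for illustration; a real system would get much longer vectors from an embedding model. The only real technique shown is cosine similarity, a common way to measure how close two vectors are.

```python
import math

# Hypothetical corpus: labels and vectors are made up for this sketch.
# A real system would compute these vectors with an embedding model.
CORPUS = {
    "piece about loss in a film": [0.9, 0.8, 0.1],
    "piece about an opera composer": [0.1, 0.2, 0.9],
    "piece about drawing habits": [0.1, 0.1, 0.2],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def nearest(query_vector, corpus, top_k=2):
    """Return the labels of the top_k documents closest in meaning."""
    scored = [
        (cosine_similarity(query_vector, vector), label)
        for label, vector in corpus.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [label for _, label in scored[:top_k]]


# A question about grief in cinema would, in a real system, be embedded
# by the same model. Here its vector is simply assumed.
question_vector = [0.8, 0.9, 0.0]
print(nearest(question_vector, CORPUS, top_k=1))
```

The question and the winning document share no words in this toy setup. They only sit near each other in the vector space, which is the whole point of the approach.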

Specificity is the whole game. When I told Claude the bot should sound like me, that meant almost nothing. When I sat with what it produced and said no, this paragraph hedges, this one explains too much, this one ends with a closing gesture I would never write, the model got sharper. Vague direction yields vague output. Particular direction yields particular output. The same is true of taste in any medium, but it surfaces with unusual clarity when the collaborator is a model that will, given enough license, smooth everything into a competent average. The work is in noticing what makes your work yours, and saying it out loud.

I also learned that you cannot speculate your way to a good system. You have to live with what you have built. The first version went live, and people started asking it things, and the questions revealed the gaps. The bot answered cleanly when someone typed "Zulu Dawn" but stumbled when they typed "What has Matt written about Zulu Dawn". The same article surfaced twice in source cards because it lived on two of my domains. The bot did not catch the typo in "Danver in the Dark", even though any friend reading the question would have known what was meant. Each of these became a small fix. Each fix was a few lines of code I did not have to write, because I could describe the problem in ordinary language and Claude would write the code and explain what it did. Over a single day I added query reformulation, conservative typo correction, cross-domain deduplication, retrieval visibility for debugging, conversation persistence, and a small fleet of interface refinements. None of these were planned. Each was a response to something I noticed.
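Two of those fixes are small enough to sketch. What follows is an illustration under stated assumptions, not the code Claude wrote: the list of known titles, the example.com and example.org addresses, and the rule that the same article has the same path on both domains are all hypothetical. The typo correction leans on Python's standard `difflib`, with a high cutoff so that only near-misses are corrected.

```python
import difflib
from urllib.parse import urlsplit

# Assumed list of titles the corpus covers; invented for this sketch.
KNOWN_TITLES = ["Dancer in the Dark", "Zulu Dawn", "Pulp Fiction"]


def correct_title(query, known_titles=KNOWN_TITLES, cutoff=0.9):
    """Return the known title if the query is a near-miss, else the query.

    The high cutoff keeps the correction conservative: anything that is
    not almost identical to a known title is left untouched.
    """
    by_lowercase = {title.lower(): title for title in known_titles}
    matches = difflib.get_close_matches(
        query.lower(), list(by_lowercase), n=1, cutoff=cutoff
    )
    return by_lowercase[matches[0]] if matches else query


def dedupe_by_path(urls):
    """Keep the first URL seen for each path, ignoring the domain.

    Assumes an article mirrored on two domains keeps the same path.
    """
    seen = set()
    unique = []
    for url in urls:
        key = urlsplit(url).path.rstrip("/").lower()
        if key not in seen:
            seen.add(key)
            unique.append(url)
    return unique


print(correct_title("Danver in the Dark"))
print(dedupe_by_path([
    "https://example.com/zulu-dawn/",
    "https://example.org/zulu-dawn",
    "https://example.com/wagner",
]))
```

The cutoff is the design choice that matters. Set too low, the bot starts "correcting" titles that were typed exactly as intended.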

The deeper shift is in how I think about my own role. I used to imagine that to make a thing like this I would need to learn to code. I no longer think that. Coding is a skill the model has. What the model does not have is judgment about what to make and how it should feel. That is mine, and the work is in articulating it well enough for the collaboration to function. This is closer to direction than to engineering. Closer to editing than to writing. The model offers, I respond. The model proposes, I refine. Eventually a thing exists that nobody could have made alone, including the model, including me.

There is something quietly strange about all of this. The bot speaks in my voice now. People who know me have told me it sounds like me. It draws from things I wrote thirty years ago and things I wrote last week, and assembles them into responses I could plausibly have given if asked the questions myself. It is not me. It is not pretending to be me. It is a kind of editorial instrument trained on my own writing, an interface to the accumulated body of the work, and the line between the work and the person who made it has become, in a small way, blurred. I am still figuring out what I think about that. The figuring out is part of what the project is for.

We are starting on something adjacent now. A visual inspiration tool drawing on hundreds of sketchbook pages I have collected over the years, the same architectural idea applied to images rather than words. The same loop of building, living with it, fixing what surfaces. The same conversation between someone who knows what he wants and a collaborator who can build it as fast as it can be described. Whatever it ends up being, it will be different from what I imagined when we started. You arrive somewhere you did not know existed because you let the journey rearrange the destination.
